The Neglected Variety Dimension

The three classic dimensions of Big Data are volume, velocity and variety. While there has been much focus on addressing the volume and velocity dimensions, the variety dimension was rather neglected for some time (or tackled in isolation). Meanwhile, however, most use cases in which large amounts of data are available in a single, well-structured data format have already been exploited. The action has now moved to aggregating and integrating large amounts of heterogeneous data from different sources, which is exactly the variety dimension. The Linked Data principles, which emphasize the holistic identification, representation and linking of data, allow us to address this dimension. As a result, just as the Web gave us a vast global information system, the Linked Data principles allow us to build a vast, global, distributed data space (or to integrate enterprise data efficiently). This is not only a vision: it has already started and is gaining more and more traction, as can be seen with the schema.org initiative and the European or International Data Spaces.

From Big Data to Cognitive Data

The three classic dimensions of Big Data are volume, velocity and variety. While there has been much focus on addressing the volume and velocity dimensions (e.g. with distributed data processing frameworks such as Hadoop, Spark and Flink), the variety dimension was rather neglected for some time. We have not only a variety of data formats (e.g. XML, CSV, JSON, relational data, graph data), but also data distributed across large value chains or across departments inside a company, under different governance regimes, data models and so on. Often the data is spread across dozens, hundreds or, in some use cases, even thousands of information systems.

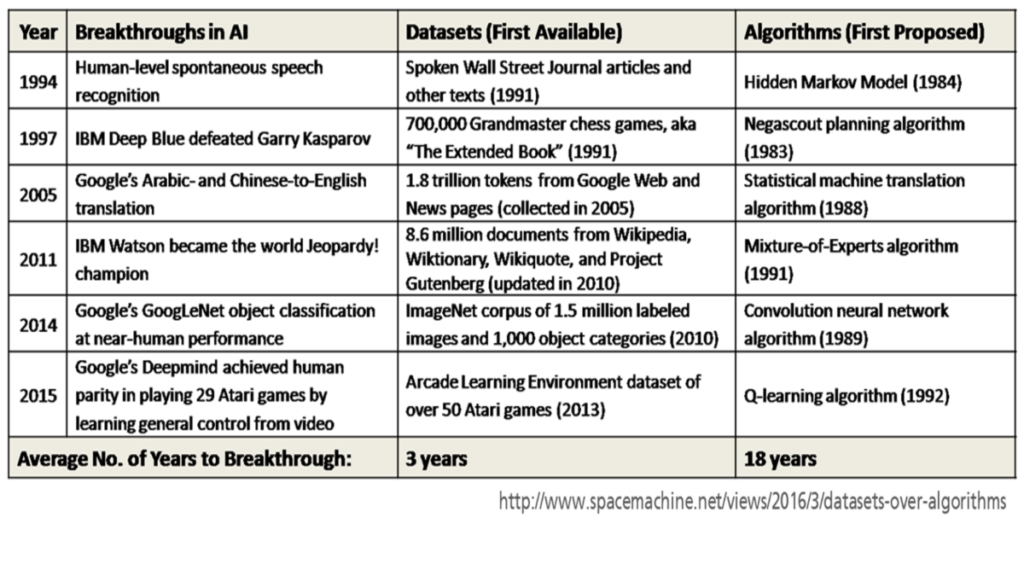

The figure illustrates that the breakthroughs in AI are mainly related to data: the algorithms were devised early and are relatively old, but only once suitable (training) data sets became available were we able to exploit them.

Another important factor, of course, is computing power: thanks to Moore’s law, every 4-5 years we can efficiently process data an order of magnitude larger than before.
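A quick back-of-the-envelope check of the 4-5 year figure, under the assumption (not stated in the text) that computing capacity doubles roughly every 18 months, i.e. a doubling period $T_d = 1.5$ years, with $f(t)$ the growth factor after $t$ years:

$$
f(t) = 2^{t/T_d}, \qquad f(4) = 2^{4/1.5} \approx 6.35, \qquad f(5) = 2^{5/1.5} \approx 10.1
$$

With a 24-month doubling period instead, the same tenfold increase would take $2 \log_2 10 \approx 6.6$ years, so the exact figure depends on which variant of Moore’s law one assumes.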

In order to deal with the variety dimension, we need a lingua franca for data mediation that allows us to:

- uniquely identify small data elements without a central identifier authority. This sounds like a small issue, but identifier clashes are probably the biggest challenge for data integration.

- map to and from a wide variety of data models, since there are, and always will be, many specialized data representation and storage mechanisms (relational, graph, XML, JSON and so on).

- enable distributed, modular schema definition and incremental schema refinement. The power of agility and collaboration is now widely acknowledged, but we also need to apply it to the creation and evolution of data and schemas.

- deal with schema and data in an integrated way, because what is a schema from one perspective turns out to be data from another (think of a car product model: it is an instance for the engineering department, but the schema for manufacturing).

- generate different perspectives on data, because data is often represented in a way suited to a particular use case. If we want to exchange and aggregate data more widely, data needs to be represented more independently and flexibly, abstracting from any particular use case.
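To make the schema/data duality from the car example concrete, here is a minimal sketch in plain Python (no RDF library) that stores everything as subject-predicate-object triples. The `example.org` namespaces and the names `ModelX`, `ProductModel`, `engineVariant` and `serialNumber` are hypothetical; only the `rdf:type` URI is the real W3C one.

```python
# Hypothetical namespaces for two departments; only RDF_TYPE is a real W3C URI.
ENG = "http://example.org/engineering/"
MFG = "http://example.org/manufacturing/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"

# Every statement is a (subject, predicate, object) triple, schema and data alike.
triples = {
    # Engineering view: the car model is an *instance* of ProductModel.
    (ENG + "ModelX", RDF_TYPE, ENG + "ProductModel"),
    (ENG + "ModelX", ENG + "engineVariant", ENG + "V6"),
    # Manufacturing view: the same model acts as the *class* of each produced car.
    (MFG + "car-0001", RDF_TYPE, ENG + "ModelX"),
    (MFG + "car-0001", MFG + "serialNumber", "SN-0001"),
}


def instances_of(cls, graph):
    """Return the sorted subjects that are typed as `cls`."""
    return sorted(s for s, p, o in graph if p == RDF_TYPE and o == cls)


def types_of(resource, graph):
    """Return the sorted classes that `resource` is typed as."""
    return sorted(o for s, p, o in graph if s == resource and p == RDF_TYPE)


print(instances_of(ENG + "ModelX", triples))  # the produced car
print(types_of(ENG + "ModelX", triples))      # ProductModel
```

The same identifier `ModelX` appears once in the object position of `rdf:type` (acting as a class) and once in the subject position (acting as an instance), so both departments' perspectives live in one graph without a separate schema layer.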

The Linked Data principles (coined by Tim Berners-Lee) allow us to address exactly these requirements:

- Use Uniform Resource Identifiers (URIs) to identify the “things” in your data: URIs are almost the same as the URLs we use to identify and locate Web pages, and they allow us to retrieve and link information in the global Web information space. We also do not need a central authority for coining identifiers: everyone can create their own URIs simply by using a domain name or Web space under their control as a prefix. “Things” here refers to any physical entity or abstract concept (e.g. products, organizations, locations and their properties/attributes).

- Use http:// URIs so that people (and machines) can look them up on the Web (or on an intranet/extranet): an important aspect is that we can also use the identifiers to retrieve information about the things they identify. A nice side effect is that we can verify the provenance of information by retrieving the description of a particular resource from its original location. This helps to establish trust in the distributed global data space.

- When a URI is looked up, return a description of the thing in the W3C Resource Description Framework (RDF): just as HTML gives us a unified representation technique for documents on the Web, we need a similar mechanism for data. RDF is relatively simple; it allows us to represent data semantically and to mediate between many other data models (I will explain this in next week’s article).

- Include links to related things: just as we can link Web pages located on different servers or even at different ends of the world, we can reuse and link to data items. This is crucial for reusing data and definitions instead of recreating them over and over again, and thus for establishing a culture of data collaboration.
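The principles can be illustrated in a few lines of Python. This is a minimal sketch, not a Linked Data server: it mints URIs under a hypothetical base `https://data.example.org/id/` (standing in for a domain you control, so no central registry is involved), describes the things as RDF triples serialized in the N-Triples syntax, and adds an `owl:sameAs` link to the DBpedia resource for Hanover as an example of linking into someone else’s dataset. Making the URIs dereferenceable over HTTP would require a Web server and is not shown.

```python
# Hypothetical base URI: any domain or Web space you control would do.
BASE = "https://data.example.org/id/"
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"
SCHEMA_LOCATION = "https://schema.org/location"
OWL_SAMEAS = "http://www.w3.org/2002/07/owl#sameAs"


def mint(local_name):
    """Mint a URI for a 'thing' by prefixing a local name with our own base."""
    return BASE + local_name


def term(value):
    """Render a term in N-Triples syntax.

    Simplification: strings starting with http(s):// are treated as URIs,
    everything else as a plain literal with quotes and line breaks escaped.
    """
    if value.startswith(("http://", "https://")):
        return f"<{value}>"
    escaped = (value.replace("\\", "\\\\").replace('"', '\\"')
               .replace("\n", "\\n").replace("\r", "\\r"))
    return f'"{escaped}"'


def to_ntriples(triples):
    """Serialize (subject, predicate, object) tuples, one statement per line."""
    return "\n".join(f"{term(s)} {term(p)} {term(o)} ." for s, p, o in triples)


library = mint("org/tib")
city = mint("place/hannover")
triples = [
    (library, RDFS_LABEL, "Technische Informationsbibliothek"),
    (library, SCHEMA_LOCATION, city),
    # Link to a related thing described in someone else's dataset.
    (city, OWL_SAMEAS, "http://dbpedia.org/resource/Hanover"),
]
print(to_ntriples(triples))
```

Because the identifiers are globally unique URIs, these triples can be merged with triples published by other parties without identifier clashes; the `owl:sameAs` statement is what connects the two datasets.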

As a result, just as the Web gave us a vast global information system, these principles allow us to build a vast, global, distributed data management system in which data can be represented and linked across different information systems. This is not just a vision; it has already started to happen. Some large-scale examples include:

- the schema.org initiative of the major search engines and Web commerce companies, which has defined a vast vocabulary for structuring data on the Web; it is already used on a large and growing share of Web pages and uses GitHub for collaborative development of the vocabulary.

- initiatives in the cultural heritage domain such as Europeana, where many thousands of memory organizations (libraries, archives, museums) integrate and link data describing their artifacts.

- the International Data Spaces Initiative, which aims to facilitate distributed data exchange in enterprise value networks and thereby to establish data sovereignty for enterprises.
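For a sense of what schema.org markup looks like in practice, here is a short Python sketch that builds a JSON-LD description of a hypothetical product and wraps it in the `<script type="application/ld+json">` element commonly used to embed structured data in Web pages. The product values are invented for illustration; the types and properties (`Product`, `name`, `sku`, `offers`, `Offer`, `price`, `priceCurrency`) come from the schema.org vocabulary.

```python
import json

# Hypothetical product; only the type and property names are schema.org terms.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Trekking Bike",
    "sku": "EX-1234",
    "offers": {
        "@type": "Offer",
        "price": "799.00",
        "priceCurrency": "EUR",
    },
}


def to_script_tag(data):
    """Wrap a JSON-LD object in the <script> element used to embed it in HTML."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


print(to_script_tag(product))
```

Since every shop that uses the same shared vocabulary describes its products with the same terms, a search engine can aggregate these descriptions across sites without site-specific mappings.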

Text by: Prof. Dr. Sören Auer, Technische Informationsbibliothek, L3S Research Center

Sören Auer’s blog on tib.eu: Link

Sören Auer’s articles on LinkedIn: Link